(Content) Moderating a Path Forward

How The Fairness Doctrine Can Inspire A Revised Section 230

Incorporating elements of the Fairness Doctrine into a revised Section 230 would require balancing three goals: promoting free expression, fostering diverse viewpoints, and addressing harmful content online. The Fairness Doctrine was designed for traditional broadcasting, so adapting its principles for the digital age presents both challenges and opportunities. Here are some ways elements of the Fairness Doctrine could be integrated into a revised Section 230:

1. Content Neutrality Requirement:

- A revised Section 230 could include a requirement for online platforms to adhere to principles of content neutrality, similar to the Fairness Doctrine's mandate for broadcasters to present contrasting viewpoints. Platforms would be obligated to ensure that their content moderation policies and practices are applied in a neutral and unbiased manner, allowing for diverse perspectives to be heard.

2. Transparency and Accountability Measures:

- A revised Section 230 could include provisions that enhance transparency and accountability for online platforms' content moderation decisions. Platforms would be required to disclose information about their moderation practices, including how decisions are made, what criteria are used, and how appeals are handled. This transparency would promote public trust and confidence in platform governance.

3. Balanced Presentation of Viewpoints:

- While a direct requirement for balanced presentation of viewpoints may not be feasible in the digital context, a revised Section 230 could encourage platforms to promote diverse perspectives and ensure that users are exposed to a range of viewpoints on important issues. This could be achieved through algorithmic transparency and diversity requirements, ensuring that content algorithms prioritize a variety of viewpoints.

4. User Empowerment and Control:

- A revised Section 230 could empower users to control their online experiences by providing tools and features that allow them to customize their content preferences and filter out objectionable or harmful content. Platforms could be incentivized to develop user-friendly tools for content curation and filtering, giving users greater control over the content they encounter.

5. Public Interest Obligations:

- Similar to the public interest obligations imposed on broadcasters under the Fairness Doctrine, a revised Section 230 could require online platforms to fulfill certain public interest obligations, such as combating misinformation, promoting civic engagement, and protecting vulnerable users. Platforms would be incentivized to prioritize public interest goals in their content moderation and platform governance efforts.

6. Independent Oversight Mechanisms:

- To ensure compliance with the revised Section 230 and promote accountability, independent oversight mechanisms could be established to monitor platform behavior, adjudicate disputes, and enforce regulatory requirements. These oversight bodies could include a mix of government agencies, industry stakeholders, and civil society organizations, ensuring a balanced and transparent approach to platform regulation.

It's important to note that any revisions to Section 230 should be carefully crafted to avoid unintended consequences, such as chilling free expression or stifling innovation. Balancing the competing interests of free speech, platform accountability, and user empowerment will require thoughtful consideration and engagement with a wide range of stakeholders, including platform operators, policymakers, civil society organizations, and the public.

Reviving The Fairness Doctrine

Reviving and updating the Fairness Doctrine to address disinformation on cable, satellite, and streaming platforms would require careful consideration of both technological and regulatory challenges. Here are some potential steps that could be taken to modernize and strengthen the Fairness Doctrine for the digital age:

Expanded Scope: The revived Fairness Doctrine could be expanded to cover a broader range of media platforms, including cable, satellite, and streaming services. This would ensure that the principles of fairness and balance apply not only to traditional broadcast networks, but also to digital platforms that have become increasingly influential in shaping public discourse.

Modernization of Standards: The Fairness Doctrine could be updated to reflect the realities of digital media consumption and the proliferation of online disinformation. This could include establishing new standards for fairness, accuracy, and accountability that are specifically tailored to digital platforms and the challenges they present in terms of content moderation and oversight.

Transparency Requirements: The revived Fairness Doctrine could impose transparency requirements on media platforms, requiring them to disclose information about the sources of content, the algorithms used to promote or suppress certain viewpoints, and any financial or editorial relationships that may influence the content they distribute. This would help users to better understand the context and credibility of the information they encounter online.

Public Interest Obligations: Media platforms could be required to demonstrate a commitment to serving the public interest as a condition of operating in the digital space. This could include providing access to diverse viewpoints, supporting local journalism, and promoting civic engagement and media literacy initiatives that help users to critically evaluate the information they encounter online.

Enforcement Mechanisms: The revived Fairness Doctrine would need to be accompanied by robust enforcement mechanisms to ensure compliance and accountability among media platforms. This could involve the creation of an independent regulatory body with the authority to investigate complaints, impose sanctions for violations, and promote adherence to the principles of fairness and accuracy in digital media.

International Collaboration: Given the global nature of digital media and the cross-border dissemination of disinformation, efforts to revive the Fairness Doctrine could benefit from international collaboration and coordination. This could involve working with other countries to develop common standards and best practices for addressing disinformation and promoting responsible journalism in the digital age.

Overall, reviving and updating the Fairness Doctrine to address disinformation on digital media platforms would require a multifaceted approach that takes into account the unique characteristics of online communication and the need for robust regulatory oversight to ensure the integrity and reliability of information in the digital age.

Strengthening Section 230

Strengthening Section 230 of the Communications Decency Act to counter disinformation on social media platforms would require careful consideration of the balance between protecting free speech and holding platforms accountable for harmful content. Here are some potential ways to strengthen Section 230:

Clarify Liability Protections: Section 230 currently provides broad immunity to online platforms for content posted by third-party users. However, there is ambiguity surrounding the scope of this immunity, particularly when it comes to content moderation decisions made by platforms. Clarifying the scope of liability protections under Section 230 could provide greater certainty for platforms while also ensuring that they are held accountable for their actions in addressing disinformation and harmful content.

Conditional Immunity: One approach to strengthening Section 230 could involve conditioning the immunity provided to platforms on their efforts to combat disinformation and promote responsible content moderation practices. Platforms that fail to take adequate measures to address disinformation could face increased liability for the content they host, incentivizing them to invest in effective content moderation tools and policies.

Transparency Requirements: Platforms could be required to provide greater transparency about their content moderation practices, including the algorithms used to rank and promote content, the criteria used to enforce community standards, and the actions taken to address disinformation and harmful content. This would help users to better understand how content is filtered and moderated on social media platforms and hold platforms accountable for their decisions.

Accountability Mechanisms: Strengthening Section 230 could involve implementing mechanisms to hold platforms accountable for their role in facilitating the spread of disinformation. This could include establishing a regulatory body or oversight mechanism with the authority to investigate complaints, impose sanctions for violations of content moderation policies, and promote best practices for addressing disinformation on social media.

User Empowerment: Empowering users to take control of their online experience could help to mitigate the spread of disinformation on social media platforms. This could involve providing users with greater control over the content they see, such as through customizable filters, content warnings, and options to report misleading or harmful content. Platforms could also invest in media literacy and digital citizenship initiatives to help users critically evaluate information online.

International Collaboration: Given the global nature of social media and the cross-border dissemination of disinformation, efforts to strengthen Section 230 could benefit from international collaboration and coordination. Working with other countries to develop common standards and best practices for addressing disinformation on social media platforms could help to mitigate the spread of harmful content online.

Overall, strengthening Section 230 to counter disinformation on social media will require a multifaceted approach that balances the need to protect free speech with the responsibility of platforms to address harmful content. By clarifying liability protections, promoting transparency and accountability, empowering users, and fostering international collaboration, policymakers can work to mitigate the spread of disinformation and promote a healthier online environment.

Digital Citizenship

Digital citizenship refers to the responsible, ethical, and safe use of digital technologies, particularly the internet and social media platforms. It encompasses a range of behaviors, attitudes, and skills that individuals need to navigate the digital world effectively and participate responsibly in online communities. Digital citizenship emphasizes the rights, responsibilities, and opportunities that come with living in an increasingly interconnected and technologically driven society.

Key aspects of digital citizenship include:

Digital Literacy: Digital citizenship involves having the skills and knowledge needed to effectively use digital technologies, including basic computer skills, internet literacy, and proficiency in navigating online platforms. This includes understanding how to search for information, evaluate sources, and critically assess online content.

Responsible Behavior: Digital citizenship entails behaving ethically and responsibly in online interactions and digital spaces. This includes respecting the rights and privacy of others, adhering to community guidelines and terms of service on social media platforms, and engaging in civil and constructive dialogue with others online.

Cybersecurity Awareness: Digital citizenship includes understanding the importance of cybersecurity and taking steps to protect oneself and others online. This involves practicing good password hygiene, being vigilant against online threats such as phishing and malware, and safeguarding personal information and digital assets.

Digital Footprint Management: Digital citizenship involves managing one's digital footprint—the trail of information and data that is created through online activities. This includes being mindful of the content one shares online, understanding the implications of digital privacy settings, and taking steps to curate and control one's online presence.

Media Literacy: Digital citizenship encompasses media literacy skills, which involve critically evaluating and analyzing media content encountered online. This includes understanding the techniques used to create and manipulate digital media, recognizing bias and misinformation, and being able to discern credible sources of information from unreliable ones.

Citizenship in Online Communities: Digital citizenship involves actively participating in online communities in a positive and constructive manner. This includes respecting diverse viewpoints, fostering inclusivity and diversity, and contributing to a culture of empathy, tolerance, and respect in digital spaces.

Overall, digital citizenship is about promoting responsible, ethical, and informed behavior in the digital age. It empowers individuals to navigate the complexities of the online world with confidence, integrity, and respect for themselves and others, ultimately contributing to a safer, more inclusive, and more democratic digital society.

Moderation As a Public Service

The idea of the government taking over the moderation duties of social media platforms is complex and raises significant questions about free speech, government control, and the role of private companies in shaping online discourse. However, if such a scenario were to be considered, here are some potential approaches:

Creation of a Government Agency or Oversight Body: The government could establish a dedicated agency or oversight body responsible for overseeing content moderation on social media platforms. This agency would be tasked with developing and enforcing content moderation policies, investigating complaints, and holding platforms accountable for their actions.

Legislation Mandating Government Oversight: Congress could pass legislation mandating that social media platforms operate under government oversight for content moderation. This legislation could outline specific requirements and standards for content moderation, including transparency, accountability, and adherence to principles of free speech.

Public-Private Partnership: The government could work in partnership with social media platforms to develop and implement content moderation policies. This could involve collaboration between government agencies, industry stakeholders, and civil society organizations to develop best practices and guidelines for responsible content moderation.

Independent Oversight Boards: The government could establish independent oversight boards or panels composed of experts in law, technology, and free speech to oversee content moderation on social media platforms. These boards would be responsible for reviewing content moderation decisions, adjudicating disputes, and ensuring that platforms adhere to established standards.

User Empowerment and Accountability: In any scenario where the government assumes a role in content moderation, it would be essential to prioritize user empowerment and accountability. This could involve giving users a voice in content moderation decisions, providing mechanisms for appeals and redress, and ensuring transparency and accountability in the moderation process.

International Collaboration: Given the global nature of social media and the challenges of content moderation in an interconnected world, any government-led approach to content moderation would benefit from international collaboration and cooperation. Working with other countries to develop common standards and best practices for content moderation could help to mitigate the spread of harmful content online.

It's important to note that any government intervention in content moderation on social media platforms would need to be carefully balanced with considerations of free speech, privacy, innovation, and the role of private companies in shaping online discourse. Finding the right balance between government oversight and private sector innovation will be essential to ensuring a healthy and vibrant online environment.

Social Media Literacy

A free, government-sponsored course in social media and disinformation literacy could be designed to teach critical thinking skills, media literacy, and responsible digital citizenship. Here's an outline of what such a course might include:

Introduction to Social Media and Disinformation: An overview of the role of social media in shaping public discourse and the spread of disinformation. This section would cover the basics of how social media platforms operate, the challenges of identifying and combating disinformation, and the importance of media literacy in the digital age.

Understanding Disinformation: A deeper dive into the nature and tactics of disinformation, including misinformation, propaganda, fake news, and conspiracy theories. This section would explore how disinformation spreads online, the psychology behind why people believe false information, and the real-world consequences of disinformation campaigns.

Critical Thinking Skills: A series of modules focused on developing critical thinking skills to help individuals evaluate information critically and discern fact from fiction. This section would cover topics such as logical reasoning, evidence-based thinking, source evaluation, and skepticism toward information encountered online.

Media Literacy: An exploration of media literacy concepts and strategies for navigating the media landscape effectively. This section would include lessons on how to analyze news sources for credibility, detect bias and manipulation in media content, and verify information before sharing it online.

Digital Citizenship and Online Safety: A discussion of responsible digital citizenship and online safety practices. This section would cover topics such as privacy protection, cybersecurity, digital footprint management, and ethical behavior in online communities.

Combating Disinformation: Practical tips and strategies for combating disinformation and promoting truth online. This section would include guidance on how to fact-check information, engage in constructive dialogue with others, and contribute to a more informed and responsible online discourse.

Case Studies and Examples: Real-world case studies and examples of disinformation campaigns and their impact. This section would illustrate the techniques used by purveyors of disinformation and highlight the importance of vigilance and critical thinking in identifying and countering false information.

Resources and Tools: A compilation of resources and tools for further learning and engagement. This section would include links to fact-checking websites, media literacy organizations, online courses, and other resources to help individuals continue their education and stay informed about disinformation issues.

Overall, a free, government-sponsored course in social media and disinformation literacy would aim to empower individuals with the knowledge and skills needed to navigate the digital landscape responsibly, critically evaluate information encountered online, and contribute to a more informed and resilient society.

Conclusion: Dangers of Disinformation

Disinformation poses significant dangers to society: it undermines trust, distorts reality, and can have far-reaching consequences for individuals, communities, and democratic institutions. Here's a summary of why disinformation is dangerous, the future threats it poses, and why stronger content management steps must be taken to curb its spread:

Undermining Trust and Democracy: Disinformation erodes trust in institutions, media, and democratic processes by spreading false or misleading information. When people can't rely on accurate information, it becomes harder for them to make informed decisions, participate meaningfully in civic life, and hold leaders and institutions accountable.

Fueling Division and Polarization: Disinformation exacerbates social divisions and polarization by promoting extreme viewpoints, amplifying partisan narratives, and sowing distrust between different groups. This can lead to increased hostility, conflict, and breakdowns in social cohesion, making it harder to find common ground and address shared challenges.

Threatening Public Health and Safety: Disinformation can have dire consequences for public health and safety, particularly during crises such as pandemics or natural disasters. False information about health treatments, safety protocols, or emergency procedures can lead to confusion, panic, and unnecessary harm to individuals and communities.

Undermining Objective Reality: Disinformation blurs the line between fact and fiction, creating a fragmented information landscape where truth becomes subjective and reality is open to interpretation. This erosion of objective reality makes it harder for people to agree on basic facts, engage in rational discourse, and find common ground on important issues.

Empowering Authoritarianism and Extremism: Disinformation can empower authoritarian regimes and extremist groups by enabling them to spread propaganda, suppress dissent, and manipulate public opinion. In the absence of reliable information sources, people may be more susceptible to authoritarian rule or extremist ideologies that promise simple solutions to complex problems.

In the face of these threats, stronger content management steps must be taken to curb the spread of disinformation and "alternative facts" through social and traditional media. These steps include:

Enhancing Content Moderation: Social media platforms and traditional media outlets must invest in more robust content moderation tools and policies to detect, flag, and remove false or misleading information. This requires a combination of human expertise and technological solutions to identify and address disinformation at scale.

Promoting Media Literacy: Education and awareness campaigns are needed to promote media literacy skills and critical thinking among the public. By teaching people how to evaluate sources, fact-check information, and navigate the digital landscape responsibly, we can empower them to identify and resist disinformation.

Regulating Social Media: Governments and policymakers should consider implementing regulations and oversight mechanisms to hold social media platforms accountable for their role in spreading disinformation. This could involve measures such as transparency requirements, data privacy protections, and sanctions for platforms that fail to address disinformation effectively.

Collaboration and Cooperation: Addressing the global nature of disinformation requires collaboration and cooperation among governments, tech companies, civil society organizations, and international stakeholders. By working together to develop common standards, share best practices, and coordinate responses, we can better combat the spread of disinformation across borders.

Overall, mitigating the dangers of disinformation requires a multifaceted approach that addresses the underlying factors driving its spread while promoting truth, transparency, and accountability in our information ecosystem. By taking proactive steps to curb disinformation and foster a culture of informed citizenship, we can build a more resilient and democratic society for the future.

Written with ChatGPT & Copilot and scanned for plagiarism by plagiarismdetector.net on January 29, 2024

Previous:

Next:

Return to Start:

Putin Is A War Criminal

Russia Is A Terrorist State:

Part 1 (1990s)

Part 2 (2000s)

Part 3 (2011 - 2016)

Part 4 (2016 - 2019)

Part 5 (2020 - 2021)

Part 6 (2022)

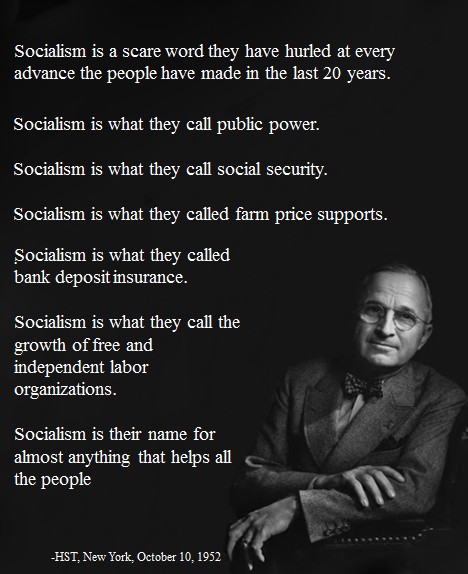

#MBASocialist

Missouri Matters Mission Statement

Deets On Values